Publishing: Business & Law

Publishing: Business & Law

I was at BookExpo in late May 2018 for a deep dive on trade publishing (general fiction and non-fiction) trends. I was happy to see the banners for new books – the huge poster above the registration section for Susan Orlean’s new “The Library Book” and for the relentless focus on authors throughout the fair—lines around booths for author signatures, and a lot of imprints that I wasn’t aware of even as a fairly regular consumer of such books. I also got some updates directly from panels with 3 trade house CEOs and from the leaders of 3 copyright organizations, and I’m happy to share my views and comments here.

The overall news seemed moderately positive for the books industry, and probably publishing more generally. Bookstore sales are flat to up—unit sales are up modestly—audio book recordings are growing very significantly (although from a small base). Print seems to be holding its own and even growing a bit more than e-books—I’m not completely sure that’s good news but I suspect it does help on the margin side given Amazon’s presence in the e-book market. Overall, books are resilient, and I think this speaks to an important part of our culture—books and their authors matter. It was good to hear from John Sargent (Macmillan), Carolyn Reidy (Simon & Schuster) and Markus Dohle (Penguin Random House) on these topics. Censorship and the role of publishing was also a topic of conversation, with John talking about Michael Wolff’s “Fire and Fury” book and Trump’s litigation threat (famously Macmillan’s reaction was to get the book to press more quickly than planned).

On the copyright law side, important updates on the small claims court bill and continued work on the copyright office modernization bill (including the question of whether the Copyright Office should properly sit in the executive or remain part of the legislative branch). The panel, including Maria Pallante (Association of American Publishers), Keith Kupferschmid (Copyright Alliance), and Mary Rasenberger (Authors Guild) spoke about the greater openness of the current administration to discussions about IP and copyright in terms of trade, work on anti-piracy, and the like. The panelists noted the significant amount of influence that Google had in the prior administration, often with an anti-IP bent. However these are still early days in the administration, which all agree is difficult to forecast and assess, and that the number of true copyright experts and advocates in Congress are lessening, with the departures of legislators such as Goodlatte and Hatch. Pallante spoke about the importance of balance in the legal system, Keith about the vitality of the copyright community(ies?) as represented by his organization the Alliance, and Mary spoke on the impact of piracy on book author royalties. The Guild of course is deeply involved in a program to review publisher and author contracts, as the Guild takes the view that while publishers seem to be holding the line on revenues, authors have seen their incomes decline significantly over the past 5 years (see https://www.authorsguild.org/where-we-stand/fair-contracts/), which Mary also touched on. I didn’t have the chance to ask Mary what the Guild’s view of the recently announced Authors Alliance ( a group of “academic” authors and copyright law professors) initiative on publishing contracts (see https://www.authorsalliance.org/2018/05/29/meet-the-contracts-guide-team/) which appears to be covering some of the same ground.

All in all, it felt comfortable to spend a day or so in my “publishing silo”, even with the concerns and controversies—the presence of Amazon looming over all, for example. But as a reminder that many things happen outside your comfort zone, I’ve been reading and monitoring other recent developments and controversies over the past several weeks (when I’ve been a bit distracted with home and garden projects), including:

- The IPA’s running commentary on the WIPO SCCR (Standing Committee on Copyright & Related Rights) meetings in Geneva, which also just concluded last week (see the pre-session briefing by James Taylor, PR head, at https://www.internationalpublishers.org/news/blog/entry/3-things-to-look-out-for-at-wipo-sccr-36) and see comments from national Publishers Association representatives such as Jessica Sanger (Germany, see below) and William Bowes (UK).

- Unfortunately WIPO these days seems more focused on copyright exceptions than on pro-copyright policy, and no doubt this will continue with tech industry-funded distortions playing on the “north-south” divide, perhaps playing politics around the draft broadcasting treaties which have been poised for finalization for several years now.

- The Music Modernization Act, with its “Classics” portion that would harmonize protection for pre-1972 sound recordings in the US, passed the House unanimously but now has a rival bill (“Access”) supported by some copyright law professors and anti-IP organizations (in many cases a similar list to the Authors Alliance team), which as the former RIAA EVP Neil Turkewitz notes plays into the hands of the tech industries.

- Canada is finally doing a review of the copyright revisions put into place several years ago which decimated secondary rights for authors and publishers, particularly in the educational context—John Degen from the The Writers Union of Canada (and current chair of the International Authors Forum) has been doing his usual stellar job of noting where the emperor of usage has no clothes (“freelance” or professional authors with decreasing remuneration), and raising concerns about the spread of the anti-remuneration narrative to other countries such as Australia…

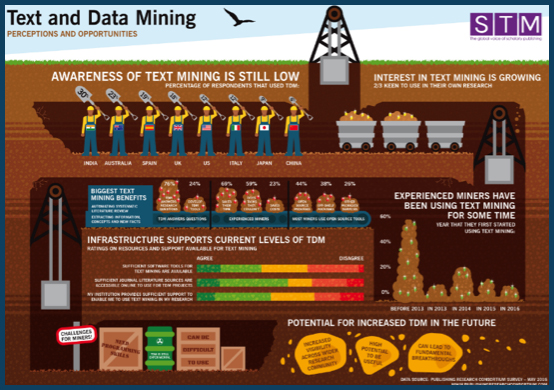

- Battles over copyright issues in Europe continue (Digital Single Market proposals), with questions raised over the news publishers’ right (which naturally Google opposes), non-authorized commercial text & data mining rights (which companies like Google and the tech industry organizations desperately want), and hopefully a bit of help on enforcement issues.

All in all, the state of publishing, authors and copyright law remain in a precarious place. Revenues are far from robust, even if they are not imploding. Authors are under enormous pressure (sometimes I must admit from tough negotiations with publishers). Piracy is rampant, both print and digital. Amazon seems to be perfectly content to discount books to a razor thin margin, presumably as a “loss leader” in its goal of selling more groceries and shoes, putting huge pressure on other distributors and retailers. Google and some of its allies seem to invent organizations and hire lobbyists, advocates and other professionals at a breathtaking speed and scale (although some copyright professors seem to resent being identified as Google-funded, as reactions to the a slew of articles on this topic from last year (e.g. https://www.wsj.com/articles/paying-professors-inside-googles-academic-influence-campaign-1499785286). Every positive or at least reasonably balanced draft law, treaty or initiative seems to be met with a remarkably quickly-organized response or counter-proposal, often with a similar anti-copyright narrative that starts with notions of huge populations of users who are deprived of access and the tech industry players that can “transform” content into search nirvana. Somehow creators are expected to continue producing, but now for the benefit of technology.

But as BookExpo shows, the book and the author continue to be celebrated. I need to get back to the books I’m currently reading, which I’ve split between Mark Bowden’s “Hue 1968” which tells a story about Vietnam that I knew as a teenager, but not well… and Ronan Farrow’s “War on Peace” which I fear will be prescient about other US-led foreign ventures without adequate support from diplomats and non-lethal advisers.

Mark Seeley

June 2018